Importing BigQuery table as Pandas DataFrame

To import a BigQuery table as a DataFrame, Pandas offers a built-in method called read_gbq that takes as arguments a query string (e.g. SELECT * FROM users;) and the credentials used for authentication, which we will build from a JSON credential file. Let's first go through the steps for creating this credential file!

Creating a service account for authentication

Before we can import our BigQuery table, we must first create a service account for authentication. We can think of service accounts as users who can be assigned certain privileges to interact with Google Cloud Platform (GCP) products - including BigQuery.

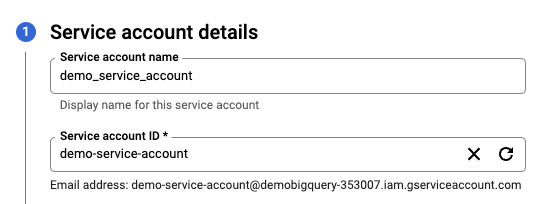

A service account can be created on the GCP console. Pick the project where your BigQuery data lives, and proceed to create the service account. There are two required steps - the first is to give a name to the service account:

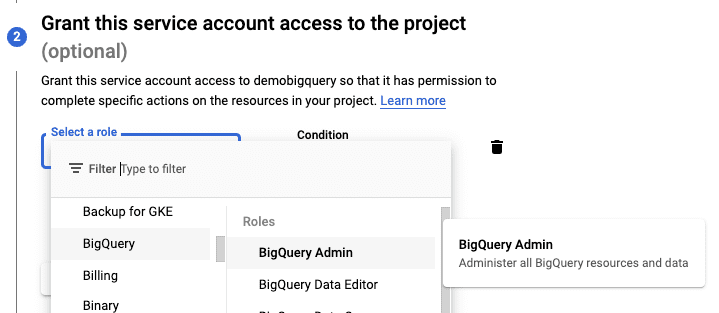

Next, we have to grant privileges to this service account:

For the sake of demonstration, we will assign the BigQuery Admin role, which carries the highest level of BigQuery privileges. In a production setting, however, we should always assign only the privileges that the service account actually needs, for better security.

We can skip the third optional step and finish creating the service account.

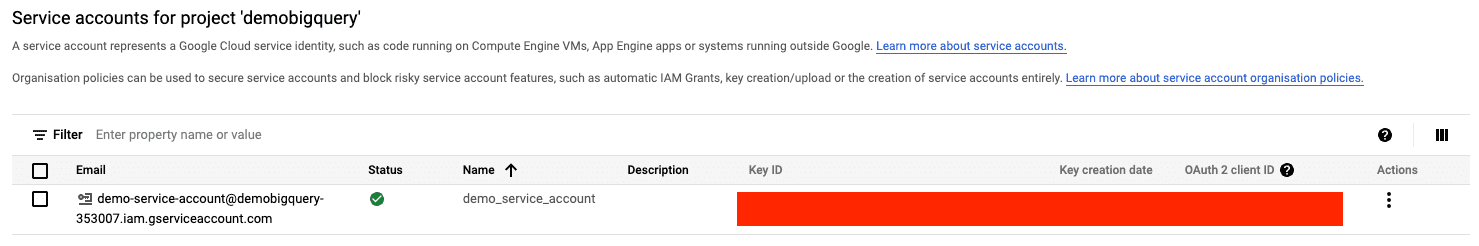

Once this step is done, we should see our new service account like so:

Generating a JSON credential file

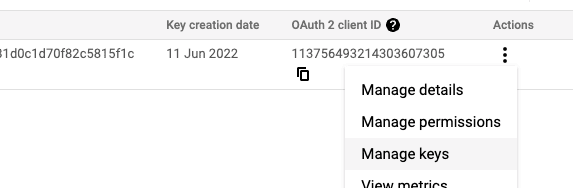

Now that the service account is created, we need to generate a JSON credential file to pass to Pandas. To do so, click on the three dots under Actions, and click on Manage keys:

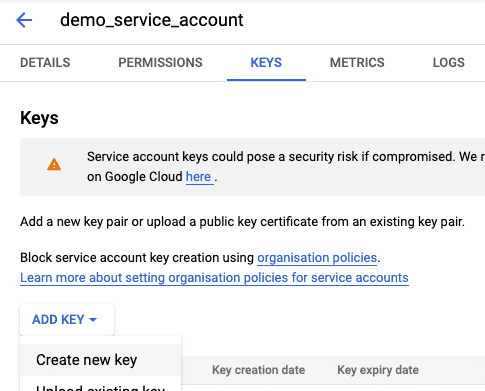

Next, click on ADD KEY, and then Create new key:

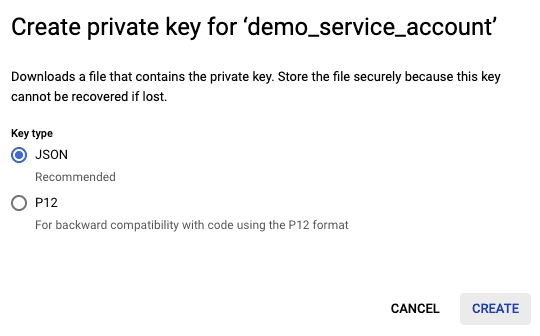

Finally, select JSON and then click CREATE:

This will download the private key, which is a JSON file that holds all the credentials required for authentication. Make sure not to share this JSON file publicly (e.g. in a GitHub repository), because anyone who obtains it can access your BigQuery data and wreak havoc.
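One way to keep the key file's location out of the source code is to read it from an environment variable. The following is only a minimal sketch: the variable name BIGQUERY_KEY_PATH and the helper credential_path_from_env are made up for this illustration and are not part of Pandas or any Google library.

```python
import os
from pathlib import Path

# Hypothetical environment variable name, chosen only for this sketch.
CREDENTIAL_ENV_VAR = "BIGQUERY_KEY_PATH"


def credential_path_from_env(env_var: str = CREDENTIAL_ENV_VAR) -> Path:
    """Return the key file path stored in the environment, failing early if unset."""
    raw_path = os.environ.get(env_var)
    if not raw_path:
        raise RuntimeError(f"Set {env_var} to the location of the JSON key file")
    # expanduser lets the variable hold paths such as ~/keys/my-key.json
    return Path(raw_path).expanduser()
```

The returned path can then be passed to service_account.Credentials.from_service_account_file in place of a hard-coded file name, so the script itself never reveals where the key lives.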

Importing BigQuery table as a Pandas DataFrame

Now that we have the JSON credential file, we can use Pandas' read_gbq(~) method to read some data from our BigQuery table as a Pandas DataFrame:

    import pandas as pd
    from google.cloud import bigquery
    from google.oauth2 import service_account

    credentials = service_account.Credentials.from_service_account_file(
        './demobigquery-353007-1fb4e20ef41a.json',
        scopes=['https://www.googleapis.com/auth/cloud-platform'],
    )
    query_string = 'SELECT * FROM `demobigquery-353007.forum.users`;'
    df = pd.read_gbq(query_string, credentials=credentials)

        name  age
    0  Cathy   40
    1   Alex   20
    2    Bob   30

Here, note the following:

- for the code to run, you may have to install the pandas-gbq library via pip or conda.
- the ./demobigquery-353007-1fb4e20ef41a.json credential file created in the previous step lives in the same directory as this Python script.
- demobigquery-353007 is the ID of our GCP project - you can find this information by logging into the GCP console.
- the forum in forum.users is the dataset name (sometimes referred to as the schema), and users is the name of our table.
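To make the naming convention above concrete, here is a small sketch of how the three parts combine into the table reference used in the query. The helper qualified_table is hypothetical and exists only for illustration; the project, dataset and table names are the ones from this guide.

```python
def qualified_table(project_id: str, dataset: str, table: str) -> str:
    """Join the three parts into a backtick-quoted BigQuery table reference."""
    parts = (project_id, dataset, table)
    if not all(parts):
        raise ValueError("project_id, dataset and table must all be non-empty")
    return "`" + ".".join(parts) + "`"


table_ref = qualified_table("demobigquery-353007", "forum", "users")
query_string = f"SELECT * FROM {table_ref};"
print(query_string)  # SELECT * FROM `demobigquery-353007.forum.users`;
```

The backticks are needed here because the project ID contains hyphens.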

If you want to create this BigQuery table, please follow my "Getting started with BigQuery" guide.

Importing a public BigQuery dataset

BigQuery has a number of datasets available for public access such as data from Stack Overflow and Hacker News.

For instance, Stack Overflow's data lives in the following:

Project ID: bigquery-public-data
Dataset: stackoverflow
Table: posts_questions

Therefore, to access some records from this BigQuery table:

    query_string = """
    SELECT id, title
    FROM `bigquery-public-data.stackoverflow.posts_questions`
    LIMIT 100
    """
    df = pd.read_gbq(query_string, credentials=credentials)

             id                                              title
    0  68922691                          Vue 3 + Module Federation
    1  68923973  Replace newline \n with expression using sed (...
    2  68926624  Wait_For_Component is not working in cogs [dis...
    3  68979195  How to merge date and time from two separate c...
    4  68997505        Slick Slider is not working on the website?
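As far as I am aware, recent Pandas releases (2.2 and later) mark pd.read_gbq as deprecated and point to the read_gbq function of the pandas-gbq library instead. The sketch below shows what the same query might look like when calling pandas-gbq directly. The helper names build_sample_query and read_sample are made up for this example, and credentials is assumed to be the service account credentials object created earlier.

```python
def build_sample_query(limit: int = 100) -> str:
    """Build the Stack Overflow sample query with a validated row limit."""
    if not isinstance(limit, int) or isinstance(limit, bool) or limit < 1:
        raise ValueError("limit must be a positive integer")
    return (
        "SELECT id, title\n"
        "FROM `bigquery-public-data.stackoverflow.posts_questions`\n"
        f"LIMIT {limit}"
    )


def read_sample(credentials, limit: int = 100):
    """Run the sample query through pandas-gbq and return a DataFrame."""
    # Imported lazily so build_sample_query works even without pandas-gbq installed.
    import pandas_gbq

    return pandas_gbq.read_gbq(build_sample_query(limit), credentials=credentials)
```

Calling read_sample(credentials, limit=5) should then return a DataFrame with the id and title columns, much like the output above.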