Getting started with BigQuery

Start your free 7-days trial now!

What is BigQuery?

BigQuery is Google's fully managed solution of storing and analyzing big data at the scale of petabytes. One of the most remarkable features of BigQuery is that the underlying infrastructure is managed entirely by Google. BigQuery can be thought of as a scalable SQL server - we can fetch and manipulate data using SQL queries.

The goal of this guide is to get our hands dirty and familiarise ourselves with the BigQuery environment. We will create a dummy table and perform some simple queries against it in BigQuery.

Setting up GCP project and enabling the BigQuery API

We first need to go through the following steps before we can start using BigQuery:

create a GCP (Google Cloud Platform) project, which can be done hereopen_in_new. You need to enable billing to start using BigQuery.

enable the BigQuery API, which can be done hereopen_in_new.

BigQuery requires your GCP project to have enabled billing, and so you would need to supply credit card details. If you're a new user, then you get $300 USD worth of free credit that expires in 90 days. BigQuery does come with a generous free-tieropen_in_new so the operations that you perform in this guide should be covered by the free-tier.

GCP also provides the BigQuery sandbox, which is an easy way to try out BigQuery without supplying credit card details. However, the sandbox does not allow for Data manipulation language (DML) statements, that is, we cannot invoke statements such as INSERT, UPDATE and DELETE. For this guide, we will be using these DML statements, and so the sandbox does not meet our needs.

What are projects, datasets and tables in BigQuery?

BigQuery organises database objects as:

Projects > Datasets > Tables

Under a single GCP project, multiple datasets can be created, under which multiple tables can be created. Datasets can be thought of as schemas, and can be created using CREATE SCEHMA. Also, tables belonging to different datasets can be still be joined.

Running our first BigQuery SQL script

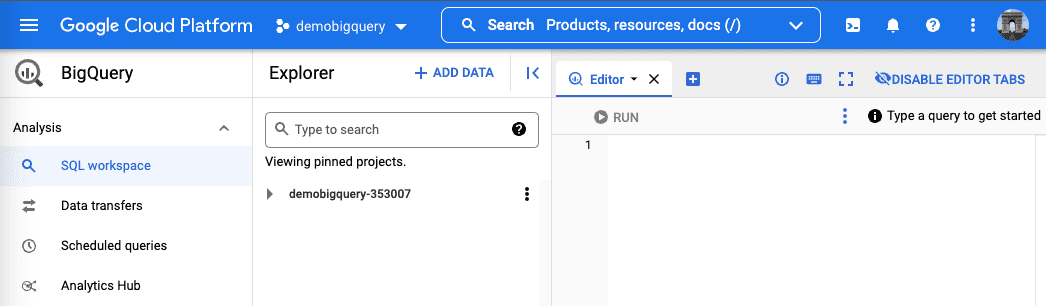

Once we have set up our GCP project and enabled the BigQuery API, we will have access to the BigQuery SQL editor:

In this editor, we will be able to run SQL statements against our BigQuery database.

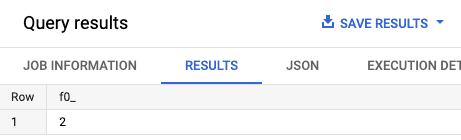

Let's try computing 1+1:

SELECT 1 + 1;

Click on the RUN button and we should see the results panel pop up at the bottom:

🎉 Congratulations - you've just written your first BigQuery SQL script!

Creating a table using SQL in BigQuery

To create a table, we first need to create a dataset:

CREATE SCHEMA forum;

Here, we've created a dataset called forum.

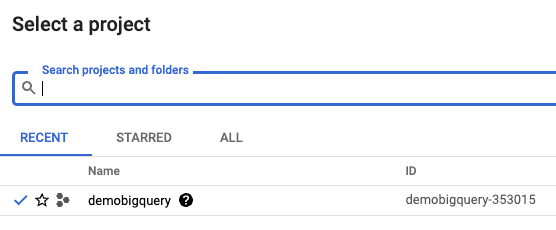

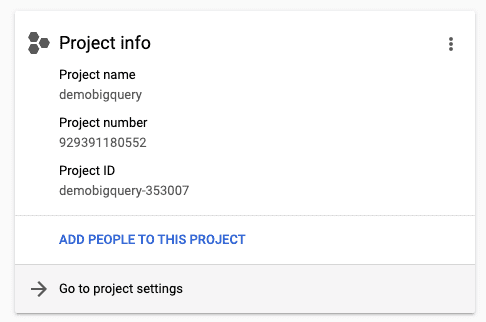

We also need to know our GCP project ID, which can be obtained by clicking on our project in the header:

We should see a list of projects and their IDs:

Copy the project ID because we need this information when creating tables.

To create an empty users table:

CREATE TABLE IF NOT EXISTS `demobigquery-353007.forum.users` ( name STRING NOT NULL, age INT,);

Here, note the following

demobigquery-353007is our project ID,forumis the name of the dataset andusersis the name of the table.we must use

`instead of apostrophes to refer to table names that include the project ID.

Unlike traditional SQL systems like PostgreSQL, BigQuery has no notion of primary keys and indexes, and instead uses columnnar as the storage method to greatly compress our data. However, similar to traditional SQL systems, the queries that we execute will be optimized automatically even without an index by BigQuery's internal query planner.

Inserting rows into a BigQuery table

To insert rows into the users table:

INSERT INTO forum.users(name, age)VALUES('Alex', 20),('Bob', 30),('Cathy', 40);

Notice how we need to include the dataset name (forum) when referring to the table.

Selecting rows from a BigQuery table

Now that we've inserted some rows into the users table, let's use the SELECT statement to print the rows of our table:

SELECT * FROM `forum.users`;

Row name age 1 Alex 202 Bob 303 Cathy 40

Notice how simple queries like this take a few seconds to complete. This is because BigQuery was designed to handle big data efficiently. While the processing time for small amounts of data may be slower in BigQuery than it is in standard SQL servers (e.g. PostgreSQL), BigQuery can process large datasets much faster.

Performing a simple query in BigQuery

To get all users whose age is strictly greater than 25:

SELECT * FROM forum.users WHERE age > 25;

Row name age 1 Cathy 402 Bob 30

Updating values in BigQuery

Updating values based on condition in BigQuery

By default, BigQuery only allows updating values with a WHERE clause:

UPDATE forum.users SET name = upper(name) WHERE name = 'Alex';SELECT * FROM forum.users;

Row name age 1 ALEX 202 Cathy 403 Bob 30

The idea is that by having to add the WHERE clause, we can avoid inadvertently updating all values.

Updating all values in BigQuery

To update all values, use WHERE TRUE:

UPDATE forum.users SET name = upper(name) WHERE TRUE;SELECT * FROM forum.users;

Row name age 1 ALEX 202 CATHY 403 BOB 30

Deleting rows in BigQuery

Deleting rows based on condition in BigQuery table

To delete rows from a table, use the DELETE statement:

DELETE forum.users WHERE age < 25;SELECT * FROM forum.users;

Row name age 1 Bob 302 Cathy 40

Note that the DELETE statement requires the WHERE clause just like for UPDATE.

Deleting all rows from BigQuery table

To delete all rows in a table, use the TRUNCATE statement:

TRUNCATE TABLE forum.users;

Note that the forum.users table still exist and is an empty table with no zeros - meta information such as the column labels and types are not removed.

Dropping BigQuery table

To drop an entire table:

DROP TABLE forum.users;

Unlike TRUNCATE, the DROP TABLE removes the table as well as its meta-information.

Deleting the GCP project

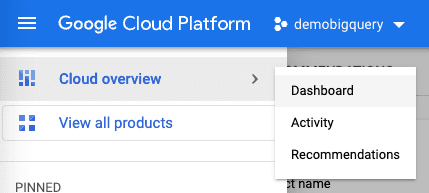

We highly recommend that you delete the GCP project once you're done experimenting to ensure that you will not be charged by the BigQuery service. To delete this GCP project, click on Cloud overview in the left side bar, and then click on Dashboard:

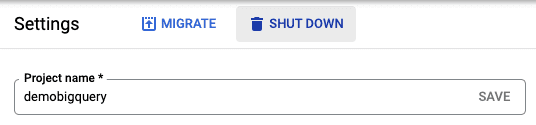

Next click on Go to project settings:

Finally, click on SHUT DOWN and follow the displayed steps: