Getting Started with Google Cloud Storage

What is Google Cloud Storage?

Google Cloud Storage (GCS) is a service offered by Google Cloud Platform (GCP) for storing files, much like Google Drive. We can upload, replace, fetch and delete files using GCS's API, which is available through client libraries for mainstream programming languages such as Python, as well as on the command line. As with most GCP services, GCS is highly scalable and comes with security features that govern who can access the files and what they can do with them.

How is Google Cloud Storage different from Google Drive?

Both Google Cloud Storage and Google Drive are used to store files, but here are some key differences between the two services:

- GCS is part of GCP while Google Drive is not. This means that GCS can be easily integrated with other GCP services such as App Engine.

- Google Drive is designed for personal use while GCS can be used in all contexts. For instance, at SkyTowner, we use GCS to host all the images!

- There are far more configuration settings available for GCS than for Google Drive. For instance, we can choose to store files in specific geographic locations (e.g. ASIA, US), and even choose the storage class (e.g. COLDLINE for infrequently accessed data).

- GCS is a paid service and you will be billed according to several factors such as the amount of data you store, the operations you perform (e.g. inserting and reading files), the network bandwidth consumed and so on.

Creating a GCP project

Since GCS is a part of GCP, we must first create a GCP project. To do so, please follow our short guide here.

Setting up Google Cloud Storage

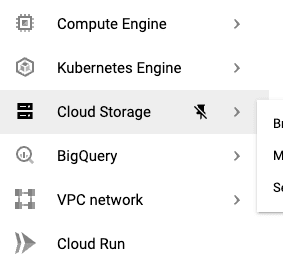

Head over to the GCP console, and on the left sidebar, click on Cloud Storage:

This should bring you to the GCS console.

What are buckets and how do we create them?

The files that we will upload will be stored inside buckets. Buckets are similar to directories (folders), but the key difference between them is that, unlike directories, buckets cannot be nested, that is, we cannot have buckets inside buckets. Let's now create a bucket on the Cloud Storage console so that we can upload some files later.

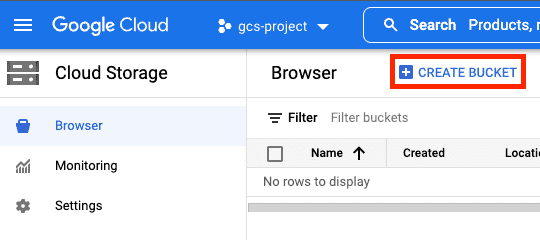

To create a bucket, click on CREATE BUCKET:

The following is some must-know information about buckets:

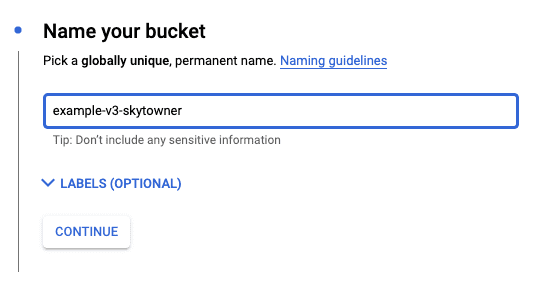

bucket names must be globally unique, which means that the name must not clash with any bucket name that is currently in use by any user.

we can set permission levels on buckets. For instance, we can make buckets public such that all the files within the bucket will be publicly available. Note that buckets are private by default - the permission levels can be configured later.

the bucket location type, storage class and name are all permanent and cannot be changed later.

Now, fill in the desired bucket name:

If you wish, you can configure the following settings for the bucket:

- location type (either multi-region (default), dual-region or region)

- storage class (either STANDARD (default), NEARLINE, COLDLINE or ARCHIVE)

- access control (either ACL or uniform bucket-level access (default))

- object protection (e.g. object versioning (none by default))

To use the default settings, you can click on the CREATE button at the bottom.
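The same bucket settings can also be supplied from code. The following is a minimal sketch that assumes the official `google-cloud-storage` Python package is installed and that credentials are already configured; the function name `create_bucket` and its default values are our own choices for illustration, not part of the library.

```python
# Storage classes listed in the console settings above.
VALID_STORAGE_CLASSES = {"STANDARD", "NEARLINE", "COLDLINE", "ARCHIVE"}


def create_bucket(name, location="US", storage_class="STANDARD"):
    """Create a bucket with a chosen location and default storage class.

    `name` must be globally unique. `location` and `storage_class`
    are supplied by the caller; the defaults here are assumptions.
    """
    if storage_class not in VALID_STORAGE_CLASSES:
        raise ValueError(f"unknown storage class: {storage_class!r}")

    # Imported lazily so the validation above runs without the library.
    from google.cloud import storage  # pip install google-cloud-storage

    client = storage.Client()  # picks up Application Default Credentials
    bucket = client.bucket(name)
    bucket.storage_class = storage_class
    return client.create_bucket(bucket, location=location)
```

For example, `create_bucket("my-unique-bucket-name", location="ASIA", storage_class="COLDLINE")` would request a COLDLINE bucket in the ASIA multi-region; the bucket name here is hypothetical.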

What are objects and how do we create them?

In the official documentation, the words objects and blobs are often used - these can simply be thought of as files. GCS objects therefore belong to buckets.

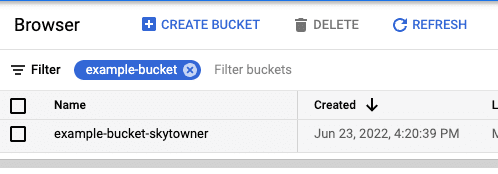

To create an object, click on the bucket that we created in the above step:

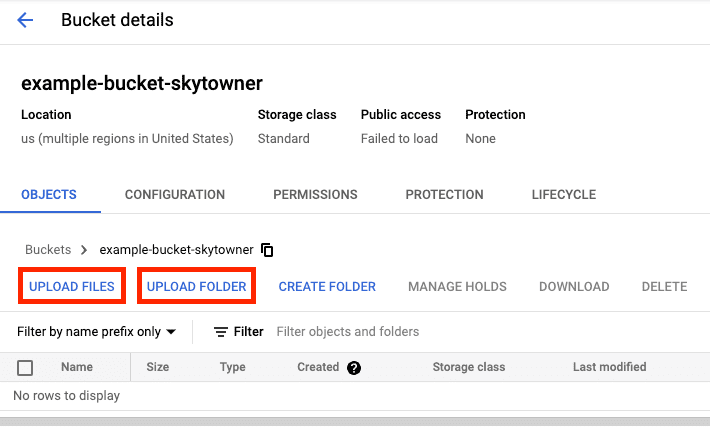

We can either upload a file or a folder by clicking on the following buttons:

Folders do not exist in Google Cloud Storage

One of the biggest misconceptions about GCS concerns folders. GCS does not provide official support for folders and all files are stored at the root level, meaning the file system is flat rather than hierarchical. However, because working with folders is convenient, GCS emulates the concept of folders by using a delimiter, most commonly /.

For instance, we can emulate the behavior of a folder by naming our file images/cat.png, which makes it seem as if the cat.png file is within the images folder. Of course, cat.png does not actually reside within an images folder; the name of the file is simply images/cat.png. What's neat is that GCS allows us to search for files by prefix, meaning we can search for all files whose names begin with images/, which is similar to finding all files within the images folder.

The fact that folders are not supported in GCS limits the type of operations we can perform in GCS. For instance, suppose we want to fetch all the files that are directly under folder_1 in the following scenario:

- 📁 folder_1
  - cat.png
  - 📁 folder_2
    - dog.png

A plain prefix search cannot return just the files directly under folder_1. If we search for files that begin with folder_1/, we get the following:

- folder_1/cat.png

- folder_1/folder_2/

- folder_1/folder_2/dog.png

As we can see, a prefix search naively returns every name that begins with folder_1/, including files that are not immediately under folder_1 like dog.png. To stop at one level, the list request also accepts a delimiter (typically /), which groups deeper names into prefixes instead of returning them. This is still string matching on names rather than real folder support.
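To make the flat namespace concrete, here is a small sketch in plain Python that models a bucket as a list of object names. It does not call GCS at all; the helper names `prefix_search` and `direct_children` are hypothetical and only illustrate how matching on names differs from walking real folders.

```python
# A bucket is modelled as a flat list of object names.
object_names = [
    "folder_1/cat.png",
    "folder_1/folder_2/",
    "folder_1/folder_2/dog.png",
]


def prefix_search(names, prefix):
    """Return every name that starts with the given prefix."""
    return [name for name in names if name.startswith(prefix)]


def direct_children(names, prefix, delimiter="/"):
    """Keep only names with no further delimiter after the prefix."""
    children = []
    for name in prefix_search(names, prefix):
        rest = name[len(prefix):]
        if rest and delimiter not in rest:
            children.append(name)
    return children


print(prefix_search(object_names, "folder_1/"))    # all three names
print(direct_children(object_names, "folder_1/"))  # only folder_1/cat.png
```

The second helper filters on the client side, which is one way to recover the "files directly under a folder" view from a flat list of names.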

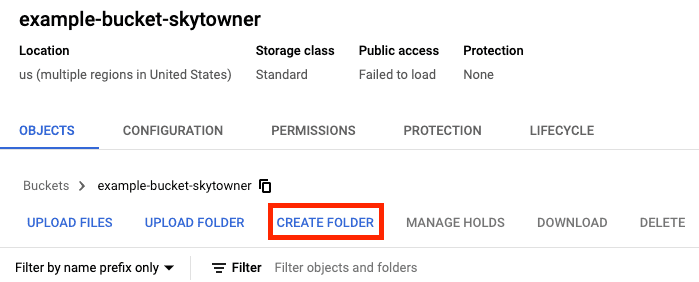

There is an option to CREATE FOLDER on the GCS web console, but this is somewhat misleading since, as discussed, folders do not exist in GCS:

Let's try creating a folder called images:

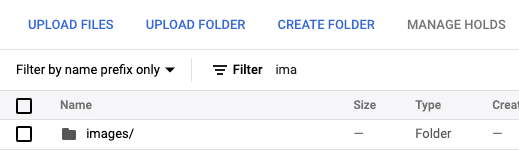

This is actually a dummy folder and is made only for your convenience. If you click on images/, GCS will search and return all files whose names begin with images/. When we upload a file under images/, the file name would automatically be prefixed with images/. For instance, uploading a file called cat.png under images/ will make the file name images/cat.png.
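The naming behavior described above can be sketched in Python. The helper `object_name` is hypothetical and simply builds the flat name. The `upload` function assumes the official `google-cloud-storage` package and configured credentials, and its parameters are illustrative.

```python
def object_name(folder, filename):
    """Build the flat object name for a file 'inside' an emulated folder."""
    return f"{folder.strip('/')}/{filename}"


def upload(bucket_name, folder, local_path, filename):
    """Upload a local file so that it appears under `folder` in the console."""
    # Imported lazily so object_name() works without the library installed.
    from google.cloud import storage  # pip install google-cloud-storage

    client = storage.Client()
    blob = client.bucket(bucket_name).blob(object_name(folder, filename))
    blob.upload_from_filename(local_path)
    return blob.name


print(object_name("images", "cat.png"))  # images/cat.png
```

Note that nothing is created for the folder itself: the object is simply stored under a name that contains the delimiter.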

Storage class of buckets

When creating buckets, we can specify their storage class: STANDARD, NEARLINE, COLDLINE and ARCHIVE:

| Storage class | Minimum storage duration | Type of files to store | Class A operations (per 10,000 operations) | Class B operations (per 10,000 operations) | Storage fee (per GB per month) | Retrieval fee (per GB) |
|---|---|---|---|---|---|---|
| STANDARD | None | Frequently accessed | $0.05 | $0.004 | $0.02 | $0 |
| NEARLINE | 30 days | Infrequently accessed | $0.10 | $0.01 | $0.01 | $0.01 |
| COLDLINE | 90 days | Rarely accessed | $0.10 | $0.05 | $0.004 | $0.02 |
| ARCHIVE | 365 days | Rarely accessed | $0.50 | $0.50 | $0.0012 | $0.05 |

Here, note the following:

- The pricing shown here is for a single region, us-central1. The exact figures depend on the chosen region of the files and the location type.

- Generally speaking, class A operations refer to write operations like insert, update and copy, whereas class B operations refer to read operations like fetch. Class A operations are generally more expensive than class B operations.

- As can be deduced from the pricing, ARCHIVE has the lowest storage fee but high operations fees (e.g. update, insert, delete, fetch). In contrast, STANDARD (which is the default) has a high storage fee but low operations fees. This means that we should use STANDARD when we interact with our files often, and opt for ARCHIVE when we simply want to store files without interacting with them. NEARLINE and COLDLINE are the middle ground between STANDARD and ARCHIVE.

- The minimum storage duration, as GCP calls it, is the minimum number of days of storage that each object is charged for. For instance, if an object is stored inside a NEARLINE bucket for 15 days, which is shorter than the minimum storage duration of NEARLINE (30 days), the storage fee for this object is charged as if it had been stored for 30 days. Of course, if the object is stored for longer than the minimum storage duration, say 45 days for a NEARLINE object, then you pay 45 days' worth of storage fees.

- Retrieval fees are charged for operations such as reading, copying, moving, and rewriting. These are additional fees on top of class A and B operations, so interacting with files stored in storage classes other than STANDARD can become costly.
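The minimum storage duration rule can be expressed as a short calculation. The sketch below uses the us-central1 figures from the table above; the function names and the 30-day month used to convert days into months are our own simplifying assumptions, so treat the result as a rough estimate rather than an actual bill.

```python
# Figures taken from the pricing table above (us-central1).
MIN_STORAGE_DAYS = {"STANDARD": 0, "NEARLINE": 30, "COLDLINE": 90, "ARCHIVE": 365}
STORAGE_FEE_PER_GB_MONTH = {
    "STANDARD": 0.02,
    "NEARLINE": 0.01,
    "COLDLINE": 0.004,
    "ARCHIVE": 0.0012,
}


def billed_days(storage_class, days_stored):
    """Days charged for: never fewer than the class's minimum duration."""
    return max(days_stored, MIN_STORAGE_DAYS[storage_class])


def storage_cost(storage_class, size_gb, days_stored, days_per_month=30):
    """Estimated storage fee in USD (assumes a 30-day billing month)."""
    months = billed_days(storage_class, days_stored) / days_per_month
    return size_gb * STORAGE_FEE_PER_GB_MONTH[storage_class] * months


print(billed_days("NEARLINE", 15))  # 30
print(billed_days("NEARLINE", 45))  # 45
```

Under these assumptions, a 100 GB NEARLINE object deleted after 15 days is estimated at the same storage fee as one kept for the full 30 days.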

For more detailed pricing information, please consult the official docs here.

Location type of buckets

There are 3 different location types to choose from when creating buckets:

- Multi-region refers to a large geographic area (e.g. United States, Asia) that contains multiple regions. Our files will be stored in multiple regions, which has two main advantages:

  - lower latency: files can be fetched from a region closer to the users.

  - high availability: if one region fails, due to a power outage for instance, our files can still be served from the other regions.

- Dual-region refers to a specific pair of regions (e.g. Osaka and Tokyo).

- Region refers to a single specific region (e.g. Tokyo).

Here are some considerations when picking location types and regions for GCS buckets:

- Generally, multi-region is recommended since it offers the highest availability of our files and also benefits from lower latency, since files can be fetched from closer geographical regions.

- If you are using other services such as App Engine to host a server, then you may wish to pick the same region for the bucket as the server to reduce the latency between the two services.

- If your application primarily targets one area, say Japan, then it would make sense to use dual-region and select Osaka and Tokyo. This will ensure low latency for your users.

- In terms of pricing, multi-region is more expensive than region because data has to be replicated across multiple regions.

Access control of buckets and objects

Here, we will give you a brief overview of how we can control who can access the files as well as what they can do with the files on GCS. Please consult our guide here for a more detailed discussion.

There are two different ways to control the authorization scheme for objects and buckets on GCS:

- ACL (access control list) allows us to set permissions on each bucket as well as on each object.

- Uniform bucket-level access allows us to set permissions on each bucket only. All the objects within the bucket will inherit the permissions set on the bucket.

We must either pick ACL or uniform bucket-level access - we cannot use them both at the same time. Generally, the uniform bucket-level access is recommended to avoid micro-management. Again, please visit our guide for a more detailed comparison between the two approaches.

There is also the option Prevent Public Access, which is separate from ACL and uniform bucket-level access. If Prevent Public Access is enabled on the bucket, then all public requests to any file within the bucket will always be denied regardless of the settings in either your ACL or uniform bucket-level access. Prevent Public Access can be enabled or disabled at any time.
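The precedence rule described above can be written as a tiny decision function. This is only a model of the rule as stated in this guide, not a call into GCS; the function and parameter names are hypothetical.

```python
def public_request_allowed(prevent_public_access, public_grant):
    """Decide whether an unauthenticated request to a file succeeds.

    prevent_public_access: True if Prevent Public Access is enabled
        on the bucket.
    public_grant: True if the ACL or uniform bucket-level access
        settings grant public read access.
    """
    if prevent_public_access:
        # Overrides whatever the ACL or bucket-level settings say.
        return False
    return public_grant


print(public_request_allowed(True, True))    # False
print(public_request_allowed(False, True))   # True
print(public_request_allowed(False, False))  # False
```

The first call shows the key point: a public grant has no effect while Prevent Public Access is enabled.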

Next steps

You can check out our GCS Python cookbook to get your hands dirty and familiarize yourself with the GCS environment using the Python client API 🚀!