Before we dive into the Gram-Schmidt process, let's go through a quick example that demonstrates how we can construct an orthogonal basis given any two basis vectors for $\mathbb{R}^2$.

Example.

Constructing an orthogonal basis using projections

Consider the following vectors:

$$\boldsymbol{x}_1=

\begin{pmatrix}

1\\2

\end{pmatrix},\;\;\;\;\;

\boldsymbol{x}_2=

\begin{pmatrix}2\\2

\end{pmatrix}$$

Construct an orthogonal basis for $\mathbb{R}^2$ using $\boldsymbol{x}_1$ and $\boldsymbol{x}_2$.

Solution. Let's start by visualizing the two vectors:

We can clearly see that the two vectors are not perpendicular to each other, which means that they do not form an orthogonal basis for $\mathbb{R}^2$. We can fix one vector, say $\boldsymbol{x}_1$, and modify the other vector $\boldsymbol{x}_2$ such that $\boldsymbol{x}_2$ becomes perpendicular to $\boldsymbol{x}_1$.

Let's set $\boldsymbol{v}_1=\boldsymbol{x}_1$. Our goal is to find $\boldsymbol{v}_2$ such that $\boldsymbol{v}_2$ is orthogonal to $\boldsymbol{v}_1$. Recall that $\boldsymbol{v}_2$ can be constructed by subtracting from $\boldsymbol{x}_2$ its projection onto the line $L$ spanned by $\boldsymbol{v}_1$, as shown below:

From the geometric interpretation of vector addition, we have the following relationship:

$$\begin{equation}\label{eq:PsFQHOCQvWAd02tK9IA}

\boldsymbol{v}_2=

\boldsymbol{x}_2-\mathrm{Proj}_{L}(\boldsymbol{x}_2)

\end{equation}$$

From the formula for the projection of a vector onto a line, we know that:

$$\begin{equation}\label{eq:WnFx9a9ZbZWJGQEa9UF}

\text{Proj}_L(\boldsymbol{x}_2)=

\frac{\boldsymbol{x}_2\cdot\boldsymbol{v}_1}

{\boldsymbol{v}_1\cdot\boldsymbol{v}_1}\boldsymbol{v}_1

\end{equation}$$

Substituting \eqref{eq:WnFx9a9ZbZWJGQEa9UF} into \eqref{eq:PsFQHOCQvWAd02tK9IA} gives:

$$\begin{align*}
\boldsymbol{v}_2&=
\boldsymbol{x}_2-
\frac{\boldsymbol{x}_2\cdot\boldsymbol{v}_1}
{\boldsymbol{v}_1\cdot\boldsymbol{v}_1}\boldsymbol{v}_1\\
&=
\begin{pmatrix}2\\2\end{pmatrix}-
\frac{(2)(1)+(2)(2)}
{(1)(1)+(2)(2)}\begin{pmatrix}1\\2\end{pmatrix}\\
&=
\begin{pmatrix}2\\2\end{pmatrix}-
\frac{6}
{5}\begin{pmatrix}1\\2\end{pmatrix}\\
&=
\begin{pmatrix}0.8\\-0.4\end{pmatrix}
\end{align*}$$

From our diagram, this looks to be correct! Indeed, $\boldsymbol{v}_1\cdot\boldsymbol{v}_2=(1)(0.8)+(2)(-0.4)=0$. Therefore, an orthogonal basis for $\mathbb{R}^2$ is:

$$\boldsymbol{v}_1=
\begin{pmatrix}
1\\2
\end{pmatrix},\;\;\;\;\;
\boldsymbol{v}_2=
\begin{pmatrix}0.8\\-0.4
\end{pmatrix}$$

■
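The construction above can be sketched in a few lines of Python. This is only an illustrative sketch: the helper names `dot` and `orthogonalize_against` are made up here, and the vectors are $\boldsymbol{x}_1$ and $\boldsymbol{x}_2$ as given in the example statement. Exact fractions are used so that the orthogonality check is not affected by rounding.

```python
from fractions import Fraction


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(p * q for p, q in zip(a, b))


def orthogonalize_against(x, v):
    """Return x minus its projection onto the line spanned by v (v must be nonzero)."""
    coeff = Fraction(dot(x, v), dot(v, v))  # (x . v) / (v . v), integer inputs assumed
    return [xi - coeff * vi for xi, vi in zip(x, v)]


x1 = [1, 2]
x2 = [2, 2]

v1 = x1
v2 = orthogonalize_against(x2, v1)

print(v2)           # [Fraction(4, 5), Fraction(-2, 5)]
print(dot(v1, v2))  # 0, so v1 and v2 are orthogonal
```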

The Gram-Schmidt process is a generalization of what we just performed to higher dimensions. To derive the Gram-Schmidt process, we require the following theorem.

Theorem.

Expressing the projection vector using an orthogonal basis

Let $W$ be a finite-dimensional subspace of a vector space $V$. If $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ is an orthogonal basis for $W$ and $\boldsymbol{v}$ is any vector in $V$, then:

$$\mathrm{Proj}_{W}(\boldsymbol{v})=

\frac{\boldsymbol{v}\cdot\boldsymbol{v}_1}{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1

+

\frac{\boldsymbol{v}\cdot\boldsymbol{v}_2}{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2

+

\cdots

+

\frac{\boldsymbol{v}\cdot\boldsymbol{v}_r}{\Vert\boldsymbol{v}_r\Vert^2}\boldsymbol{v}_r

$$

If $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ is an orthonormal basis for $W$, then:

$$\mathrm{Proj}_{W}(\boldsymbol{v})=

(\boldsymbol{v}\cdot\boldsymbol{v}_1)\boldsymbol{v}_1

+

(\boldsymbol{v}\cdot\boldsymbol{v}_2)\boldsymbol{v}_2

+

\cdots

+

(\boldsymbol{v}\cdot\boldsymbol{v}_r)\boldsymbol{v}_r

$$

Proof. Let $W$ be a finite-dimensional subspace of a vector space $V$ and let $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ be an orthogonal basis for $W$. Recall that any vector $\boldsymbol{v}\in{V}$ can be expressed as a sum of two vectors that are orthogonal to each other, that is, $\boldsymbol{v}=\boldsymbol{w}_1+\boldsymbol{w}_2$ where $\boldsymbol{w}_1\in{W}$ and $\boldsymbol{w}_2\in{W}^\perp$. Here, $\boldsymbol{w}_1=\mathrm{Proj}_W(\boldsymbol{v})$. Because $\boldsymbol{w}_1\in{W}$ and $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ is an orthogonal basis for $W$, the vector $\boldsymbol{w}_1$ can be expressed as:

$$\begin{equation}\label{eq:htY2XMEdsQyceWOhHP7}

\boldsymbol{w}_1=

\frac{\boldsymbol{w}_1\cdot\boldsymbol{v}_1}{\Vert{\boldsymbol{v}_1}\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_1\cdot\boldsymbol{v}_2}{\Vert{\boldsymbol{v}_2}\Vert^2}\boldsymbol{v}_2+

\cdots+

\frac{\boldsymbol{w}_1\cdot\boldsymbol{v}_r}{\Vert{\boldsymbol{v}_r}\Vert^2}\boldsymbol{v}_r

\end{equation}$$

Since $\boldsymbol{w}_2$ is orthogonal to the subspace $W$, we have that $\boldsymbol{w}_2$ is orthogonal to every vector of $W$, including the basis vectors $\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r$ of $W$. Recall that the dot product of two perpendicular vectors is zero:

$$\begin{gather*}

\boldsymbol{w}_2\cdot\boldsymbol{v}_1=0\\

\boldsymbol{w}_2\cdot\boldsymbol{v}_2=0\\

\vdots\\

\boldsymbol{w}_2\cdot\boldsymbol{v}_r=0\\

\end{gather*}$$

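Since each $\boldsymbol{w}_2\cdot\boldsymbol{v}_i$ is zero, we can add these terms to the numerators of \eqref{eq:htY2XMEdsQyceWOhHP7} without changing its value: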

$$\begin{align*}

\boldsymbol{w}_1&=

\frac{\boldsymbol{w}_1\cdot\boldsymbol{v}_1+\boldsymbol{w}_2\cdot\boldsymbol{v}_1}{\Vert{\boldsymbol{v}_1}\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_1\cdot\boldsymbol{v}_2+\boldsymbol{w}_2\cdot\boldsymbol{v}_2}{\Vert{\boldsymbol{v}_2}\Vert^2}\boldsymbol{v}_2+

\cdots+

\frac{\boldsymbol{w}_1\cdot\boldsymbol{v}_r+\boldsymbol{w}_2\cdot\boldsymbol{v}_r}{\Vert{\boldsymbol{v}_r}\Vert^2}\boldsymbol{v}_r\\

&=\frac{(\boldsymbol{w}_1+\boldsymbol{w}_2)\cdot\boldsymbol{v}_1}{\Vert{\boldsymbol{v}_1}\Vert^2}\boldsymbol{v}_1+

\frac{(\boldsymbol{w}_1+\boldsymbol{w}_2)\cdot\boldsymbol{v}_2}{\Vert{\boldsymbol{v}_2}\Vert^2}\boldsymbol{v}_2+

\cdots+

\frac{(\boldsymbol{w}_1+\boldsymbol{w}_2)\cdot\boldsymbol{v}_r}{\Vert{\boldsymbol{v}_r}\Vert^2}\boldsymbol{v}_r\\

\end{align*}$$

Now, because $\boldsymbol{v}=\boldsymbol{w}_1+\boldsymbol{w}_2$, this reduces to:

$$\begin{equation}\label{eq:PCh2udaAc6cnzmwjYVC}

\boldsymbol{w}_1

=\frac{\boldsymbol{v}\cdot\boldsymbol{v}_1}{\Vert{\boldsymbol{v}_1}\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{v}\cdot\boldsymbol{v}_2}{\Vert{\boldsymbol{v}_2}\Vert^2}\boldsymbol{v}_2+

\cdots+

\frac{\boldsymbol{v}\cdot\boldsymbol{v}_r}{\Vert{\boldsymbol{v}_r}\Vert^2}\boldsymbol{v}_r\\

\end{equation}$$

Note that if $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ is an orthonormal basis, then the length of each basis vector is one. Therefore, \eqref{eq:PCh2udaAc6cnzmwjYVC} reduces to:

$$\boldsymbol{w}_1

=(\boldsymbol{v}\cdot\boldsymbol{v}_1)\boldsymbol{v}_1+

(\boldsymbol{v}\cdot\boldsymbol{v}_2)\boldsymbol{v}_2+

\cdots+

(\boldsymbol{v}\cdot\boldsymbol{v}_r)\boldsymbol{v}_r$$

This completes the proof.

■
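To make the projection formula concrete, here is a minimal Python sketch. The function name `project` and the sample vectors are assumptions chosen for illustration; the only requirement taken from the theorem is that the basis vectors are mutually orthogonal and nonzero.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(p * q for p, q in zip(a, b))


def project(v, orthogonal_basis):
    """Projection of v onto the span of mutually orthogonal, nonzero vectors."""
    proj = [0.0] * len(v)
    for b in orthogonal_basis:
        coeff = dot(v, b) / dot(b, b)  # (v . v_i) / ||v_i||^2
        proj = [p + coeff * bi for p, bi in zip(proj, b)]
    return proj


# Hypothetical data: an orthogonal basis for the xy-plane inside R^3.
basis = [[1.0, 1.0, 0.0], [1.0, -1.0, 0.0]]
v = [3.0, 5.0, 7.0]

w1 = project(v, basis)               # component of v inside W
w2 = [a - b for a, b in zip(v, w1)]  # component of v orthogonal to W

print(w1)                            # [3.0, 5.0, 0.0]
print([dot(w2, b) for b in basis])   # [0.0, 0.0]
```

The second printed line illustrates the step used in the proof: the leftover vector $\boldsymbol{w}_2=\boldsymbol{v}-\boldsymbol{w}_1$ has zero dot product with every basis vector of $W$.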

Theorem.

Gram-Schmidt process

Given a basis $\{\boldsymbol{w}_1,\boldsymbol{w}_2,\cdots,\boldsymbol{w}_r\}$ for the subspace $W$ of $\mathbb{R}^n$, we can construct an orthogonal basis $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ for $W$ where:

$$\begin{align*}

\boldsymbol{v}_1&=\boldsymbol{w}_1\\

\boldsymbol{v}_2

&=\boldsymbol{w}_2-

\frac{\boldsymbol{w}_2\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1\\

\boldsymbol{v}_3&=\boldsymbol{w}_3-

\Big(\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_2}{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2\Big)\\

\boldsymbol{v}_4&=\boldsymbol{w}_4-

\Big(\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_2}

{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2+

\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_3}

{\Vert\boldsymbol{v}_3\Vert^2}\boldsymbol{v}_3

\Big)\\\\

&\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\vdots\\\\

\boldsymbol{v}_r&=\boldsymbol{w}_r-

\Big(\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_2}

{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2+

\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_3}

{\Vert\boldsymbol{v}_3\Vert^2}\boldsymbol{v}_3+\cdots+

\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_{r-1}}

{\Vert\boldsymbol{v}_{r-1}\Vert^2}\boldsymbol{v}_{r-1}

\Big)

\end{align*}$$

Note that we can easily convert any orthogonal basis into an orthonormal basis by dividing each basis vector by its length.

Proof. Let $W$ be a subspace of $\mathbb{R}^n$ with basis $\{\boldsymbol{w}_1,\boldsymbol{w}_2,\cdots,\boldsymbol{w}_r\}$.

We first let $\boldsymbol{v}_1=\boldsymbol{w}_1$. Let $W_1$ be the subspace spanned by $\boldsymbol{w}_1$, which means that $\{\boldsymbol{w}_1\}$ is a basis for $W_1$. Since $W_1$ is spanned by a single basis vector, $W_1$ is simply a line. We can construct $\boldsymbol{v}_2$ that is orthogonal to $\boldsymbol{v}_1$ using $\boldsymbol{w}_2$ like so:

$$\begin{equation}\label{eq:DlntcNN5PCsJKfkc7Sz}

\boldsymbol{v}_2=

\boldsymbol{w}_2-\mathrm{Proj}_{W_1}(\boldsymbol{w}_2)

\end{equation}$$

Visually, the relationship between the vectors is:

By the theorem on expressing the projection vector using an orthogonal basis, we can express the projection as:

$$\begin{equation}\label{eq:NOHA67ECOblfxSXhX0m}

\mathrm{Proj}_{W_1}(\boldsymbol{w}_2)=

\frac{\boldsymbol{w}_2\cdot\boldsymbol{v}_1}{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1

\end{equation}$$

Substituting \eqref{eq:NOHA67ECOblfxSXhX0m} into \eqref{eq:DlntcNN5PCsJKfkc7Sz} gives:

$$\boldsymbol{v}_2

=\boldsymbol{w}_2-

\frac{\boldsymbol{w}_2\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1$$

Great, we've managed to find $\boldsymbol{v}_2$ that is orthogonal to $\boldsymbol{v}_1$. Next, to construct $\boldsymbol{v}_3$ that is orthogonal to both $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$ using $\boldsymbol{w}_3$, we find a vector orthogonal to the subspace $W_2$ spanned by $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$. Since $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$ are linearly independent and span $W_2$, they form a basis for $W_2$. Note that, unlike $W_1$ which traced a line, $W_2$ is a flat plane.

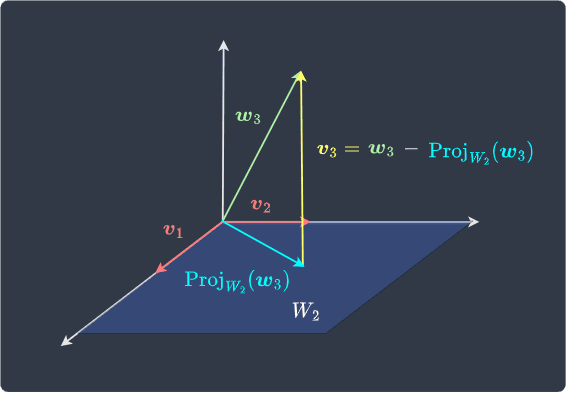

Now, $\boldsymbol{v}_3$ can be computed by:

$$\begin{equation}\label{eq:C263WvfQPmXAvC5J3zj}

\boldsymbol{v}_3=

\boldsymbol{w}_3-\mathrm{Proj}_{W_2}(\boldsymbol{w}_3)

\end{equation}$$

Visually, the relationship between the vectors is:

By the theorem on expressing the projection vector using an orthogonal basis, because $\{\boldsymbol{v}_1,\boldsymbol{v}_2\}$ is an orthogonal basis for $W_2$, we know that $\mathrm{Proj}_{W_2}(\boldsymbol{w}_3)\in{W_2}$ can be written as:

$$\mathrm{Proj}_{W_2}(\boldsymbol{w}_3)=

\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_2}

{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2$$

Therefore, \eqref{eq:C263WvfQPmXAvC5J3zj} becomes:

$$\begin{align*}

\boldsymbol{v}_3

&=\boldsymbol{w}_3-

\Big(\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_2}

{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2

\Big)

\end{align*}$$

We've now managed to find three vectors $\boldsymbol{v}_1$, $\boldsymbol{v}_2$ and $\boldsymbol{v}_3$ that are orthogonal! Now, $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\boldsymbol{v}_3\}$ form a basis for the subspace $W_3$. Similarly, to construct $\boldsymbol{v}_4$ that is orthogonal to these vectors using $\boldsymbol{w}_4$, we compute:

$$\begin{align*}

\boldsymbol{v}_4

&=\boldsymbol{w}_4-\mathrm{Proj}_{W_3}(\boldsymbol{w}_4)\\

&=\boldsymbol{w}_4-

\Big(\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_2}

{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2+

\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_3}

{\Vert\boldsymbol{v}_3\Vert^2}\boldsymbol{v}_3

\Big)

\end{align*}$$

We keep on repeating this process until we end up with an orthogonal basis for $W$. Remember, if $W$ originally had $r$ basis vectors, then we must continue this process until we get $r$ orthogonal basis vectors. This completes the proof.

■
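The steps of the proof translate fairly directly into code. The following is a minimal sketch rather than a production implementation: the function name `gram_schmidt` and the sample vectors are assumptions, the input is assumed to be linearly independent, and plain floating-point arithmetic is used, so the resulting dot products are zero only up to rounding.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(p * q for p, q in zip(a, b))


def gram_schmidt(basis):
    """Return mutually orthogonal vectors spanning the same subspace as `basis`.

    Assumes the input vectors are linearly independent, so no result is the zero vector.
    """
    orthogonal = []
    for w in basis:
        v = list(w)
        # Subtract the projection of w onto each previously constructed vector.
        for u in orthogonal:
            coeff = dot(w, u) / dot(u, u)  # (w_k . v_j) / ||v_j||^2
            v = [vi - coeff * ui for vi, ui in zip(v, u)]
        orthogonal.append(v)
    return orthogonal


# Hypothetical input: three linearly independent vectors in R^3.
ws = [[1.0, 2.0, 2.0], [1.0, 0.0, 1.0], [0.0, 1.0, -1.0]]
vs = gram_schmidt(ws)

for i in range(len(vs)):
    for j in range(i + 1, len(vs)):
        print(i + 1, j + 1, dot(vs[i], vs[j]))  # each dot product should be 0 up to rounding
```

Each pass through the outer loop corresponds to one line of the theorem: the first vector is copied unchanged, and every later vector has its projection onto the span of the earlier ones removed.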

Example.

Constructing an orthogonal basis using the Gram-Schmidt process

Consider the basis $\{\boldsymbol{w}_1,\boldsymbol{w}_2,\boldsymbol{w}_3\}$ for $\mathbb{R}^3$ where:

$$\boldsymbol{w}_1=

\begin{pmatrix}

1\\1\\0

\end{pmatrix},\;\;\;\;\;

\boldsymbol{w}_2=

\begin{pmatrix}

1\\0\\1

\end{pmatrix},\;\;\;\;\;

\boldsymbol{w}_3=

\begin{pmatrix}

0\\1\\1

\end{pmatrix}$$

Use the Gram-Schmidt process to construct an orthogonal basis for $\mathbb{R}^3$.

Solution. Let $\boldsymbol{v}_1=\boldsymbol{w}_1$. Next, $\boldsymbol{v}_2$, which is orthogonal to $\boldsymbol{v}_1$, can be constructed by:

$$\begin{align*}

\boldsymbol{v}_2

&=\boldsymbol{w}_2-

\frac{\boldsymbol{w}_2\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1\\

&=\begin{pmatrix}1\\0\\1\end{pmatrix}-

\frac{(1)(1)+(0)(1)+(1)(0)}

{(1)^2+(1)^2+(0)^2}\begin{pmatrix}1\\1\\0\end{pmatrix}\\

&=\begin{pmatrix}1\\0\\1\end{pmatrix}-

\frac{1}

{2}\begin{pmatrix}1\\1\\0\end{pmatrix}\\

&=\begin{pmatrix}0.5\\-0.5\\1\end{pmatrix}

\end{align*}$$

Finally, $\boldsymbol{v}_3$, which is orthogonal to both $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$, can be constructed by:

$$\begin{align*}

\boldsymbol{v}_3&=\boldsymbol{w}_3-

\Big(\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_2}{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2\Big)\\

&=\begin{pmatrix}0\\1\\1\end{pmatrix}-

\left(\frac{(0)(1)+(1)(1)+(1)(0)}{(1)^2+(1)^2+(0)^2}\begin{pmatrix}1\\1\\0\end{pmatrix}

+\frac{(0)(0.5)+(1)(-0.5)+(1)(1)}{(0.5)^2+(-0.5)^2+(1)^2}\begin{pmatrix}0.5\\-0.5\\1\end{pmatrix}\right)\\

&=\begin{pmatrix}0\\1\\1\end{pmatrix}-

\left(\frac{1}{2}\begin{pmatrix}1\\1\\0\end{pmatrix}

+\frac{0.5}{1.5}\begin{pmatrix}0.5\\-0.5\\1\end{pmatrix}\right)\\

&=\begin{pmatrix}-2/3\\2/3\\2/3\end{pmatrix}

\end{align*}$$

By the Gram-Schmidt process, we have managed to construct an orthogonal basis $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\boldsymbol{v}_3\}$ for $\mathbb{R}^3$ where:

$$\boldsymbol{v}_1=

\begin{pmatrix}

1\\1\\0

\end{pmatrix},\;\;\;\;\;

\boldsymbol{v}_2=

\begin{pmatrix}

0.5\\-0.5\\1

\end{pmatrix},\;\;\;\;\;

\boldsymbol{v}_3=

\begin{pmatrix}

-2/3\\2/3\\2/3

\end{pmatrix}$$

Let's still confirm that $\boldsymbol{v}_1$, $\boldsymbol{v}_2$ and $\boldsymbol{v}_3$ are orthogonal:

$$\begin{align*}

\boldsymbol{v}_1\cdot\boldsymbol{v}_2&=

\begin{pmatrix}1\\1\\0\end{pmatrix}\cdot

\begin{pmatrix}0.5\\-0.5\\1\end{pmatrix}\\

&=(1)(0.5)+(1)(-0.5)+(0)(1)\\&=0\\\\

\boldsymbol{v}_1\cdot\boldsymbol{v}_3&=

\begin{pmatrix}1\\1\\0\end{pmatrix}\cdot

\begin{pmatrix}-2/3\\2/3\\2/3\end{pmatrix}\\

&=(1)(-2/3)+(1)(2/3)+(0)(2/3)\\&=0\\\\

\boldsymbol{v}_2\cdot\boldsymbol{v}_3&=

\begin{pmatrix}0.5\\-0.5\\1\end{pmatrix}\cdot

\begin{pmatrix}-2/3\\2/3\\2/3\end{pmatrix}\\

&=(0.5)(-2/3)+(-0.5)(2/3)+(1)(2/3)\\

&=0

\end{align*}$$

Indeed, $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\boldsymbol{v}_3\}$ is an orthogonal basis for $\mathbb{R}^3$.

■
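As a sketch of how an orthogonal basis like this one can be turned into an orthonormal basis, we can divide each vector by its length. The symbols $\boldsymbol{q}_1$, $\boldsymbol{q}_2$ and $\boldsymbol{q}_3$ are labels introduced here for the resulting unit vectors:

$$\begin{align*}
\Vert\boldsymbol{v}_1\Vert&=\sqrt{(1)^2+(1)^2+(0)^2}=\sqrt{2},
&\boldsymbol{q}_1&=\frac{1}{\sqrt{2}}\begin{pmatrix}1\\1\\0\end{pmatrix}\\
\Vert\boldsymbol{v}_2\Vert&=\sqrt{(0.5)^2+(-0.5)^2+(1)^2}=\sqrt{\frac{3}{2}},
&\boldsymbol{q}_2&=\frac{1}{\sqrt{6}}\begin{pmatrix}1\\-1\\2\end{pmatrix}\\
\Vert\boldsymbol{v}_3\Vert&=\sqrt{\Big(-\frac{2}{3}\Big)^2+\Big(\frac{2}{3}\Big)^2+\Big(\frac{2}{3}\Big)^2}=\frac{2}{\sqrt{3}},
&\boldsymbol{q}_3&=\frac{1}{\sqrt{3}}\begin{pmatrix}-1\\1\\1\end{pmatrix}
\end{align*}$$

Scaling a vector by a positive number does not change its direction, so these unit vectors remain mutually orthogonal.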

There are two ways to obtain an orthonormal basis using the Gram-Schmidt process:

1. At the end, convert each orthogonal basis vector into a unit vector by dividing it by its length.

2. At every step, convert the constructed orthogonal basis vector into a unit vector.

If we take the latter approach, the Gram-Schmidt process can be expressed as below.

Theorem.

Constructing an orthonormal basis using the Gram-Schmidt process

Given a basis $\{\boldsymbol{w}_1,\boldsymbol{w}_2,\cdots,\boldsymbol{w}_r\}$, we can construct an orthogonal basis $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ using the Gram-Schmidt process. If $\boldsymbol{q}_i=\boldsymbol{v}_i/\Vert\boldsymbol{v}_i\Vert$ is the unit vector in the direction of $\boldsymbol{v}_i$ for $i=1,2,\cdots,r$, then the equations for the Gram-Schmidt process can be simplified as:

$$\begin{align*}

\boldsymbol{v}_1&=\boldsymbol{w}_1\\

\boldsymbol{v}_2&=\boldsymbol{w}_2-

(\boldsymbol{w}_2\cdot\boldsymbol{q}_1)\boldsymbol{q}_1\\

\boldsymbol{v}_3&=\boldsymbol{w}_3-

\Big((\boldsymbol{w}_3\cdot\boldsymbol{q}_1)\boldsymbol{q}_1+

(\boldsymbol{w}_3\cdot\boldsymbol{q}_2)\boldsymbol{q}_2\Big)\\

\boldsymbol{v}_4&=\boldsymbol{w}_4-

\Big((\boldsymbol{w}_4\cdot\boldsymbol{q}_1)

\boldsymbol{q}_1+

(\boldsymbol{w}_4\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+

(\boldsymbol{w}_4\cdot\boldsymbol{q}_3)\boldsymbol{q}_3

\Big)\\\\

&\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\vdots\\\\

\boldsymbol{v}_r&=\boldsymbol{w}_r-

\Big((\boldsymbol{w}_r\cdot\boldsymbol{q}_1)\boldsymbol{q}_1+

(\boldsymbol{w}_r\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+

(\boldsymbol{w}_r\cdot\boldsymbol{q}_3)\boldsymbol{q}_3+\cdots+

(\boldsymbol{w}_r\cdot\boldsymbol{q}_{r-1})

\boldsymbol{q}_{r-1}

\Big)

\end{align*}$$

Note that the set $\{\boldsymbol{q}_1,\boldsymbol{q}_2,\cdots,\boldsymbol{q}_r\}$ is an orthonormal basis by definition.

Proof. Recall the Gram-Schmidt process used for constructing an orthogonal basis:

$$\begin{align*}

\boldsymbol{v}_1&=\boldsymbol{w}_1\\

\boldsymbol{v}_2

&=\boldsymbol{w}_2-

\frac{\boldsymbol{w}_2\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1\\

\boldsymbol{v}_3&=\boldsymbol{w}_3-

\Big(\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_3\cdot\boldsymbol{v}_2}{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2\Big)\\

\boldsymbol{v}_4&=\boldsymbol{w}_4-

\Big(\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_2}

{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2+

\frac{\boldsymbol{w}_4\cdot\boldsymbol{v}_3}

{\Vert\boldsymbol{v}_3\Vert^2}\boldsymbol{v}_3

\Big)\\\\

&\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\vdots\\\\

\boldsymbol{v}_r&=\boldsymbol{w}_r-

\Big(\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_1}

{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1+

\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_2}

{\Vert\boldsymbol{v}_2\Vert^2}\boldsymbol{v}_2+

\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_3}

{\Vert\boldsymbol{v}_3\Vert^2}\boldsymbol{v}_3+\cdots+

\frac{\boldsymbol{w}_r\cdot\boldsymbol{v}_{r-1}}

{\Vert\boldsymbol{v}_{r-1}\Vert^2}\boldsymbol{v}_{r-1}

\Big)

\end{align*}$$

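Here, $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ is an orthogonal basis. At step $1$, we can convert $\boldsymbol{v}_1$ into a unit vector $\boldsymbol{q}_1$ by dividing $\boldsymbol{v}_1$ by its length, that is, $\boldsymbol{q}_1=\boldsymbol{v}_1/\Vert\boldsymbol{v}_1\Vert$. Because $\boldsymbol{v}_1=\Vert\boldsymbol{v}_1\Vert\boldsymbol{q}_1$, the projection term in step $2$ becomes $\frac{\boldsymbol{w}_2\cdot\boldsymbol{v}_1}{\Vert\boldsymbol{v}_1\Vert^2}\boldsymbol{v}_1=(\boldsymbol{w}_2\cdot\boldsymbol{q}_1)\boldsymbol{q}_1$, so step $2$ can now be simplified to: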

$$\boldsymbol{v}_2=

\boldsymbol{w}_2-

(\boldsymbol{w}_2\cdot\boldsymbol{q}_1)\boldsymbol{q}_1$$

We now convert $\boldsymbol{v}_2$ into a unit vector $\boldsymbol{q}_2$, which gives us an orthonormal set $\{\boldsymbol{q}_1,\boldsymbol{q}_2\}$. Step $3$ can be written as:

$$\boldsymbol{v}_3=

\boldsymbol{w}_3-

\Big((\boldsymbol{w}_3\cdot\boldsymbol{q}_1)\boldsymbol{q}_1+

(\boldsymbol{w}_3\cdot\boldsymbol{q}_2)\boldsymbol{q}_2\Big)$$

For step $r$, we have the following:

$$\boldsymbol{v}_r=

\boldsymbol{w}_r-

\Big((\boldsymbol{w}_r\cdot\boldsymbol{q}_1)\boldsymbol{q}_1+

(

\boldsymbol{w}_r\cdot\boldsymbol{q}_2)\boldsymbol{q}_2

+\cdots+

(\boldsymbol{w}_r\cdot\boldsymbol{q}_{r-1})\boldsymbol{q}_{r-1}

\Big)$$

Note that to obtain a complete orthonormal basis, we will have to convert $\boldsymbol{v}_r$ into a unit vector as well. This completes the proof.

■
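The variant that normalizes at every step can be sketched as follows. The function name `gram_schmidt_orthonormal` is an assumption, the input is assumed to be linearly independent, and the sample vectors are the basis of $\mathbb{R}^3$ used in the earlier example.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(p * q for p, q in zip(a, b))


def gram_schmidt_orthonormal(basis):
    """Return an orthonormal list q_1, ..., q_r built from a linearly independent list."""
    qs = []
    for w in basis:
        v = list(w)
        # Because every q has length one, each coefficient is simply w . q.
        for q in qs:
            coeff = dot(w, q)
            v = [vi - coeff * qi for vi, qi in zip(v, q)]
        norm = math.sqrt(dot(v, v))  # ||v_k||, nonzero for independent input
        qs.append([vi / norm for vi in v])
    return qs


ws = [[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
qs = gram_schmidt_orthonormal(ws)

for q in qs:
    print(q, dot(q, q))  # each squared length should be 1 up to rounding
```

Compared with the unnormalized process, the division by $\Vert\boldsymbol{v}_j\Vert^2$ disappears from the inner loop and is replaced by a single normalization at the end of each step.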

Theorem.

Dot product of an original basis vector and the corresponding orthonormal basis vector

Let $\{\boldsymbol{w}_1,\boldsymbol{w}_2,\cdots,\boldsymbol{w}_r\}$ be any basis for a subspace $W$. Let $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_r\}$ be an orthogonal basis constructed using the Gram-Schmidt process and let $\{\boldsymbol{q}_1,\boldsymbol{q}_2,\cdots,\boldsymbol{q}_r\}$ be the corresponding orthonormal basis.

For $i=1,2,\cdots,r$, we have that:

$$\boldsymbol{w}_i\cdot\boldsymbol{q}_i=\Vert\boldsymbol{v}_i\Vert$$

Proof. Suppose we use the variant of the Gram-Schmidt process in which we build up an orthonormal basis at every step. The $i$-th orthogonal basis vector is constructed by:

$$\begin{align*}
\boldsymbol{v}_i
&=\boldsymbol{w}_i-
\Big((\boldsymbol{w}_i\cdot\boldsymbol{q}_1)
\boldsymbol{q}_1+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+\cdots+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})\boldsymbol{q}_{i-1}\Big)
\end{align*}$$

Multiplying both sides by $\frac{1}{\Vert\boldsymbol{v}_i\Vert}$ gives:

$$\begin{align*}
\frac{1}{\Vert\boldsymbol{v}_i\Vert}\boldsymbol{v}_i
&=\frac{1}{\Vert\boldsymbol{v}_i\Vert}
\Big(\boldsymbol{w}_i-
\Big((\boldsymbol{w}_i\cdot\boldsymbol{q}_1)
\boldsymbol{q}_1+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+\cdots+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})\boldsymbol{q}_{i-1}\Big)
\Big)
\end{align*}$$

The left-hand side is equal to the unit vector $\boldsymbol{q}_i$ by definition and so:

$$\begin{align*}
\boldsymbol{q}_i
&=\frac{1}{\Vert\boldsymbol{v}_i\Vert}
\Big(\boldsymbol{w}_i-
\Big((\boldsymbol{w}_i\cdot\boldsymbol{q}_1)
\boldsymbol{q}_1+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+\cdots+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})\boldsymbol{q}_{i-1}\Big)
\Big)\\
\Vert\boldsymbol{v}_i\Vert\boldsymbol{q}_i
&=\boldsymbol{w}_i-
\Big((\boldsymbol{w}_i\cdot\boldsymbol{q}_1)
\boldsymbol{q}_1+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+\cdots+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})\boldsymbol{q}_{i-1}\Big)\\
\boldsymbol{w}_i&=
\Vert\boldsymbol{v}_i\Vert\boldsymbol{q}_i
+(\boldsymbol{w}_i\cdot\boldsymbol{q}_1)
\boldsymbol{q}_1+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+\cdots+
(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})\boldsymbol{q}_{i-1}
\end{align*}$$

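Now, performing a dot product with $\boldsymbol{q}_i$ on both sides, and using the fact that $\boldsymbol{q}_j\cdot\boldsymbol{q}_i=0$ for $j\ne{i}$ because the vectors $\boldsymbol{q}_1,\boldsymbol{q}_2,\cdots,\boldsymbol{q}_i$ are orthonormal, gives: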

$$\begin{align*}

\boldsymbol{w}_i\cdot\boldsymbol{q}_i&=

\Big(\Vert\boldsymbol{v}_i\Vert\boldsymbol{q}_i

+(\boldsymbol{w}_i\cdot\boldsymbol{q}_1)

\boldsymbol{q}_1+

(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)\boldsymbol{q}_2+\cdots+

(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})\boldsymbol{q}_{i-1}\Big)

\cdot\boldsymbol{q}_i\\

&=\Vert\boldsymbol{v}_i\Vert(\boldsymbol{q}_i\cdot\boldsymbol{q}_i)

+(\boldsymbol{w}_i\cdot\boldsymbol{q}_1)

(\boldsymbol{q}_1\cdot\boldsymbol{q}_i)+

(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)(\boldsymbol{q}_2\cdot\boldsymbol{q}_i)

+\cdots+

(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})

(\boldsymbol{q}_{i-1}\cdot\boldsymbol{q}_i)\\

&=\Vert\boldsymbol{v}_i\Vert\Vert\boldsymbol{q}_i\Vert^2

+(\boldsymbol{w}_i\cdot\boldsymbol{q}_1)

(0)+

(\boldsymbol{w}_i\cdot\boldsymbol{q}_2)

(0)

+\cdots+

(\boldsymbol{w}_i\cdot\boldsymbol{q}_{i-1})

(0)\\

&=\Vert\boldsymbol{v}_i\Vert(1)^2\\

&=\Vert\boldsymbol{v}_i\Vert

\end{align*}$$

This completes the proof.

■
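As a quick sanity check of the identity $\boldsymbol{w}_i\cdot\boldsymbol{q}_i=\Vert\boldsymbol{v}_i\Vert$ derived in the proof, consider the earlier example in $\mathbb{R}^3$ with $\boldsymbol{w}_2=(1,0,1)$ and $\boldsymbol{v}_2=(0.5,-0.5,1)$, and take $\boldsymbol{q}_2=\boldsymbol{v}_2/\Vert\boldsymbol{v}_2\Vert$:

$$\begin{align*}
\Vert\boldsymbol{v}_2\Vert^2&=(0.5)^2+(-0.5)^2+(1)^2=1.5\\
\boldsymbol{w}_2\cdot\boldsymbol{q}_2
&=\frac{\boldsymbol{w}_2\cdot\boldsymbol{v}_2}{\Vert\boldsymbol{v}_2\Vert}
=\frac{(1)(0.5)+(0)(-0.5)+(1)(1)}{\sqrt{1.5}}
=\frac{1.5}{\sqrt{1.5}}
=\sqrt{1.5}
=\Vert\boldsymbol{v}_2\Vert
\end{align*}$$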

Theorem.

Orthogonal relationship between original basis vectors and orthogonal basis vectors

Let $\{\boldsymbol{w}_1,\boldsymbol{w}_2,\cdots,\boldsymbol{w}_n\}$ be any basis for the subspace $W$. If $\{\boldsymbol{v}_1,\boldsymbol{v}_2,\cdots,\boldsymbol{v}_n\}$ is the corresponding orthogonal basis constructed using the Gram-Schmidt process, then $\boldsymbol{w}_i$ is orthogonal to vectors $\boldsymbol{v}_{i+1}$, $\boldsymbol{v}_{i+2}$, $\cdots$, $\boldsymbol{v}_{n}$ for $i=1,2,\cdots,n-1$.

Proof. Recall that we start the Gram-Schmidt process by setting $\boldsymbol{v}_1=\boldsymbol{w}_1$. We then select $\boldsymbol{v}_2$ such that $\boldsymbol{v}_2$ is orthogonal to the subspace $W_1$ spanned by $\boldsymbol{v}_1$. Since $\boldsymbol{w}_1\in{W}_1$, we have that:

$$\boldsymbol{w_1}

\;\text{ is orthogonal to }\;

\boldsymbol{v}_2$$

In the 2nd step, we select $\boldsymbol{v}_3$ such that $\boldsymbol{v}_3$ is orthogonal to the subspace $W_2$ spanned by $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$. Recall the relationship between $\boldsymbol{w}_2$ and $\boldsymbol{v}_2$ below:

$$\boldsymbol{w}_2=

\boldsymbol{v}_2+\mathrm{Proj}_{W_1}(\boldsymbol{w}_2)$$

Where $\mathrm{Proj}_{W_1}(\boldsymbol{w}_2)\in{W_1}$. Because $W_1$ is spanned by $\boldsymbol{v}_1$, we know that $\mathrm{Proj}_{W_1}(\boldsymbol{w}_2)$ can be expressed as some linear combination of $\boldsymbol{v}_1$. This means that $\boldsymbol{w}_2$ can be expressed as some linear combination of $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$. Because $W_2$ is spanned by $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$, we have that $\boldsymbol{w}_2\in{W_2}$. Also $\boldsymbol{w}_1\in{W_2}$ since $\boldsymbol{w}_1\in{W_1}$ where $W_1$ is spanned by $\boldsymbol{v}_1$. Because $\boldsymbol{w}_1,\boldsymbol{w}_2\in{W_2}$ and $\boldsymbol{v}_3$ is orthogonal to any vector in $W_2$, we have that:

$$\begin{align*}

&\boldsymbol{w_1}

\;\text{ is orthogonal to }\;

\boldsymbol{v}_2, \boldsymbol{v}_3\\

&\boldsymbol{w_2}

\;\text{ is orthogonal to }\;

\boldsymbol{v}_3\\

\end{align*}$$

In the 3rd step, we select $\boldsymbol{v}_4$ such that $\boldsymbol{v}_4$ is orthogonal to the subspace $W_3$ spanned by $\boldsymbol{v}_1$, $\boldsymbol{v}_2$ and $\boldsymbol{v}_3$. Recall the relationship between $\boldsymbol{v}_3$ and $\boldsymbol{w}_3$ below:

$$\boldsymbol{w}_3=

\boldsymbol{v}_3+\mathrm{Proj}_{W_2}(\boldsymbol{w}_3)

$$

Where $\mathrm{Proj}_{W_2}(\boldsymbol{w}_3)\in{W_2}$. This means that $\boldsymbol{w}_3$ can be expressed as some linear combination of $\boldsymbol{v}_3$ and the basis vectors of $W_2$, which we know are $\boldsymbol{v}_1$ and $\boldsymbol{v}_2$. Because $W_3$ is spanned by $\boldsymbol{v}_1$, $\boldsymbol{v}_2$ and $\boldsymbol{v}_3$, we have that $\boldsymbol{w}_3\in{W_3}$. Next, we already established that:

$$\boldsymbol{w}_1,\boldsymbol{w}_2\in{W_2}$$

Because every vector in $W_2$ is also in $W_3$, we have that $\boldsymbol{w}_1,\boldsymbol{w}_2,\boldsymbol{w}_3\in{W_3}$. The vector $\boldsymbol{v}_4$ is orthogonal to any vector in $W_3$, which means that:

$$\begin{align*}

&\boldsymbol{w_1}

\;\text{ is orthogonal to }\;

\boldsymbol{v}_2,\boldsymbol{v}_3,\boldsymbol{v}_4\\

&\boldsymbol{w_2}

\;\text{ is orthogonal to }\;

\boldsymbol{v}_3,\boldsymbol{v}_4\\

&\boldsymbol{w_3}

\;\text{ is orthogonal to }\;

\boldsymbol{v}_4\\

\end{align*}$$

In general, in the $j$-th step, we add a vector $\boldsymbol{v}_{j+1}$ into the list of vectors orthogonal to each of $\boldsymbol{w}_1$, $\boldsymbol{w}_2$, $\cdots$, $\boldsymbol{w}_{j}$. In other words, $\boldsymbol{w}_i$ is orthogonal to $\boldsymbol{v}_{i+1}$, $\boldsymbol{v}_{i+2}$, $\cdots$, $\boldsymbol{v}_{n}$ for $i=1,2,\cdots,n-1$. This completes the proof.

■
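This relationship can be checked on a small case. The sketch below is only an illustration: the helper `gram_schmidt` is written here for the purpose, the input is the basis of $\mathbb{R}^3$ from the earlier example, and exact fractions are used so that the dot products come out as exactly zero rather than approximately zero.

```python
from fractions import Fraction


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(p * q for p, q in zip(a, b))


def gram_schmidt(basis):
    """Orthogonalize a linearly independent list of vectors (no normalization)."""
    orthogonal = []
    for w in basis:
        v = list(w)
        for u in orthogonal:
            coeff = dot(w, u) / dot(u, u)
            v = [vi - coeff * ui for vi, ui in zip(v, u)]
        orthogonal.append(v)
    return orthogonal


ws = [[Fraction(c) for c in w] for w in ([1, 1, 0], [1, 0, 1], [0, 1, 1])]
vs = gram_schmidt(ws)

# w_i should be orthogonal to every later v_j, that is, for all j > i.
for i in range(len(ws)):
    for j in range(i + 1, len(vs)):
        print(f"w{i + 1} . v{j + 1} =", dot(ws[i], vs[j]))  # each line ends in 0
```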