Uploading a file on Databricks and reading the file in a notebook


In this guide, we will go through the steps of uploading a simple text file on Databricks, and then reading this file using Python in a Databricks notebook.

Uploading a file on Databricks

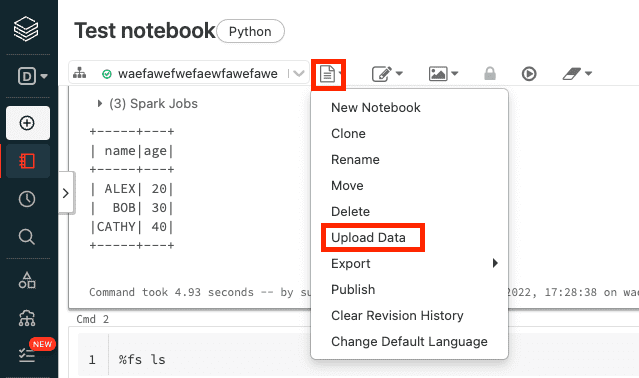

To upload a file on Databricks, click on Upload Data:

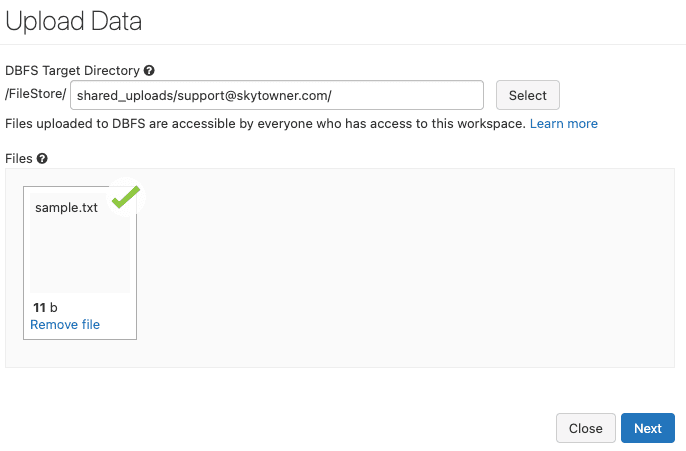

Although the button is labeled Upload Data, the file does not have to be a data file such as a CSV. Any file can be uploaded, for example a JSON file.

Next, select the file that you wish to upload, and then click on Next:

Here, we'll be uploading a text file called sample.txt.

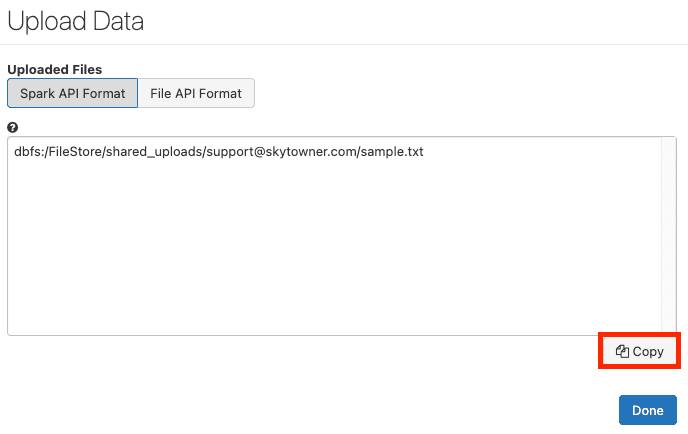

Next, copy the file path in Spark API Format:

Finally, click on Done. The text file has now been uploaded to DBFS (Databricks File System).

Copying the file from DBFS to the local file system on the driver node

The problem is that Python's built-in file functions cannot open a file using its dbfs:/ path. Therefore, we first copy the file from DBFS to the standard file system of the driver node like so:

# The dbfs path that you copied in the previous step
dbfs_path = 'dbfs:/FileStore/shared_uploads/support@skytowner.com/sample.txt'
local_path = 'file:///tmp/sample.txt'

# Copy (cp) the file from dbfs to local file system in driver node
dbutils.fs.cp(dbfs_path, local_path)

True
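The two paths above use different prefixes: dbfs:/ points at DBFS, while file: tells dbutils.fs.cp to write to the driver's local disk. Python's built-in open() does not interpret either prefix, so the path to pass to it is the part after file://. A minimal sketch of that conversion using the standard library's urllib.parse.urlparse is shown below; the helper name to_local_path is our own and is not a Databricks API.

```python
from urllib.parse import urlparse


def to_local_path(uri: str) -> str:
    """Turn a file: URI (as passed to dbutils.fs.cp) into a plain path for open().

    Hypothetical helper for illustration only; it is not part of dbutils.
    """
    parsed = urlparse(uri)
    if parsed.scheme != "file":
        # dbfs:/ paths refer to DBFS, not to the driver's local disk
        raise ValueError(f"expected a file: URI, got {uri!r}")
    return parsed.path


print(to_local_path("file:///tmp/sample.txt"))  # /tmp/sample.txt
```

Both file:///tmp/sample.txt and the shorter file:/tmp/sample.txt map to the same local path, /tmp/sample.txt.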

After running this code, sample.txt is written to the /tmp folder of the driver node's local file system:

ls /tmp

...sample.txt...
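To check for the copied file from Python rather than with a shell command, the standard library's os.listdir() can be used instead. A small sketch, assuming the same /tmp destination as above; the function name copied_to_driver is hypothetical:

```python
import os


def copied_to_driver(filename: str, directory: str = "/tmp") -> bool:
    """Return True if `filename` is present in `directory` on the local file system."""
    return filename in os.listdir(directory)


# Should print True after the dbutils.fs.cp step has run on this driver
print(copied_to_driver("sample.txt"))
```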

Reading the file using Python

Finally, we can read the content of the file using Python like so:
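A minimal sketch of this step, assuming the file was copied to /tmp/sample.txt as shown earlier, passes the plain local path (without the file: prefix) to Python's built-in open(). The helper name read_text_file is our own, and the UTF-8 encoding is an assumption about the uploaded file:

```python
from pathlib import Path


def read_text_file(path: str) -> str:
    """Return the full contents of a text file on the driver's local disk."""
    # Assumes the uploaded file is UTF-8 encoded
    with open(path, encoding="utf-8") as f:
        return f.read()


local_file = "/tmp/sample.txt"  # destination used in the dbutils.fs.cp call
if Path(local_file).exists():
    print(read_text_file(local_file))
else:
    print(f"{local_file} not found; run the copy step first")
```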

Hello world

Here, we see that the content of the text file is 'Hello world'.